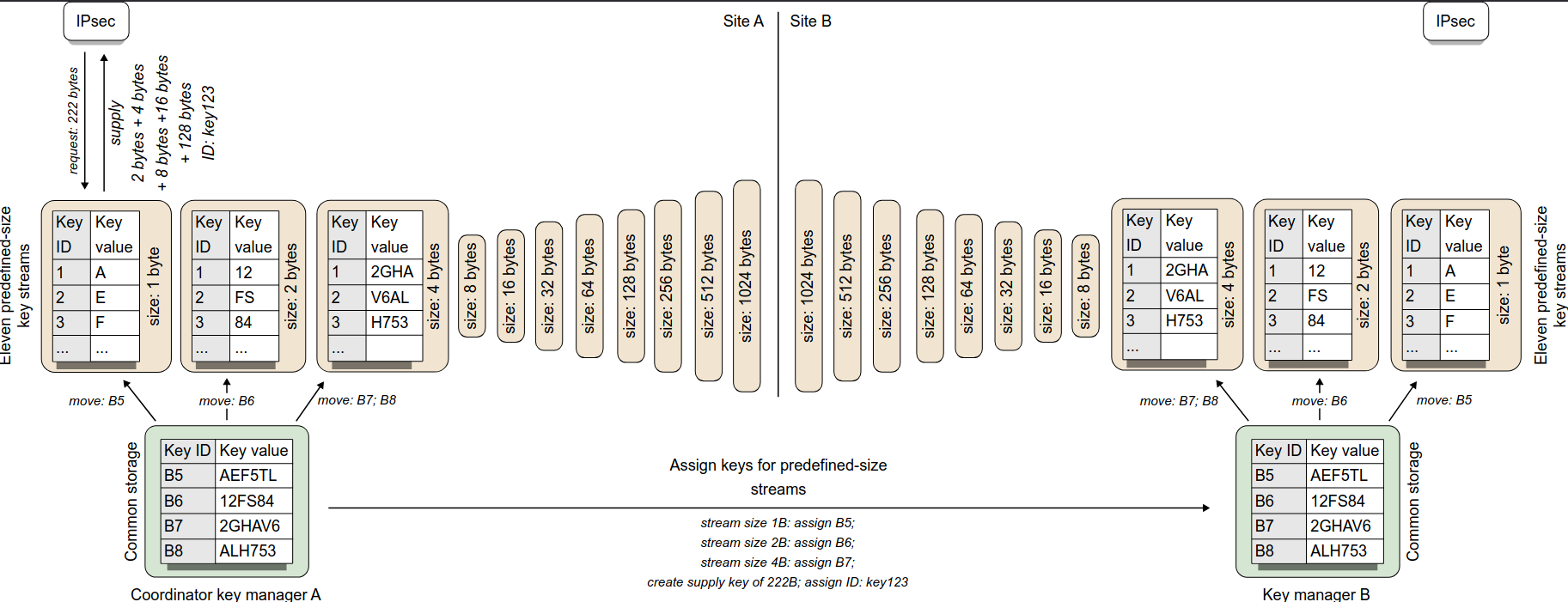

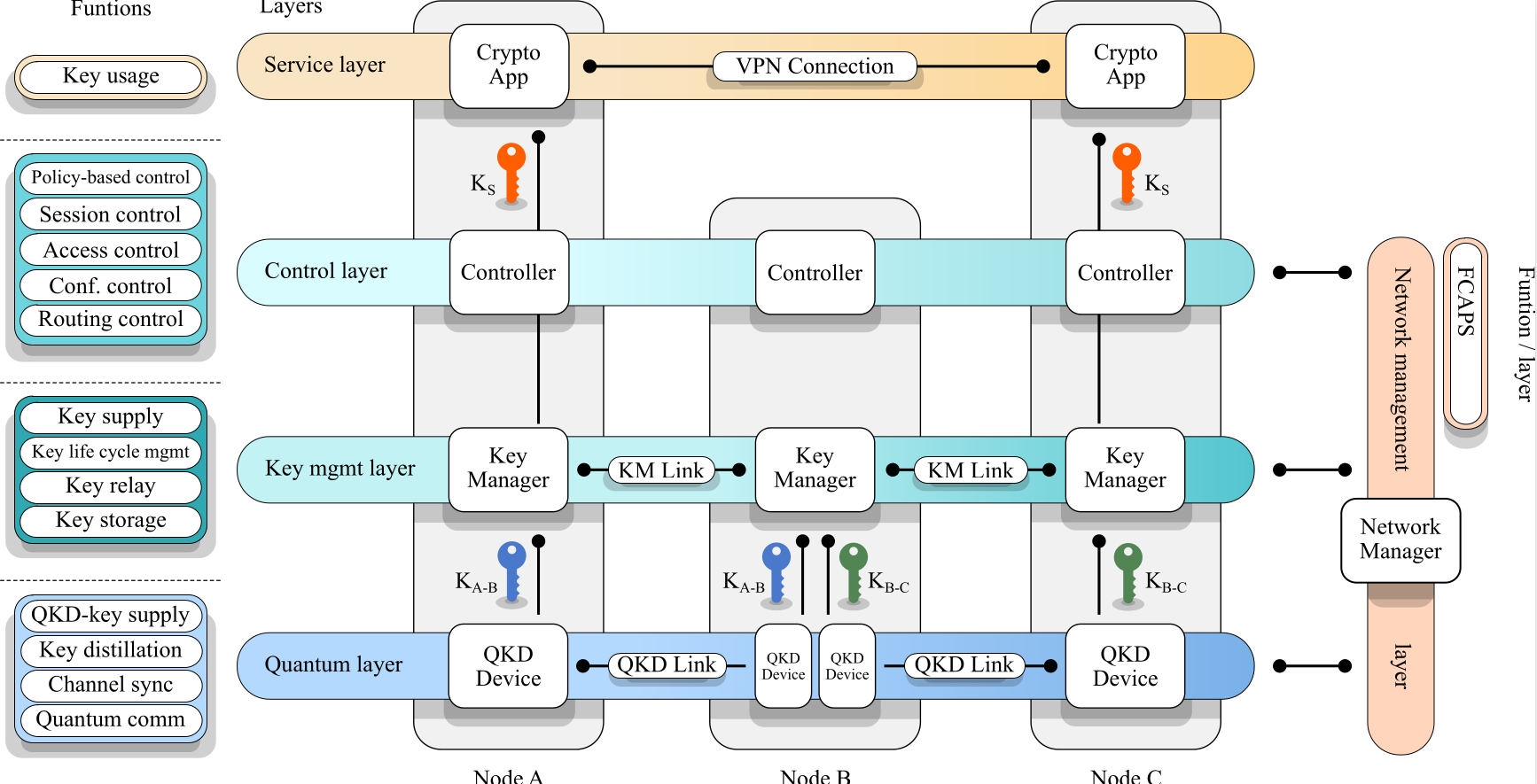

The Department of Telecommunications participates in the EU MSCA-DN QUESTING project

New article published in Journal of Optical Communications and Networking

General Expression for Azimuthal Angle Distribution of Various Elliptical-Shaped Geometry-Based Stochastic Channel Models

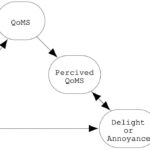

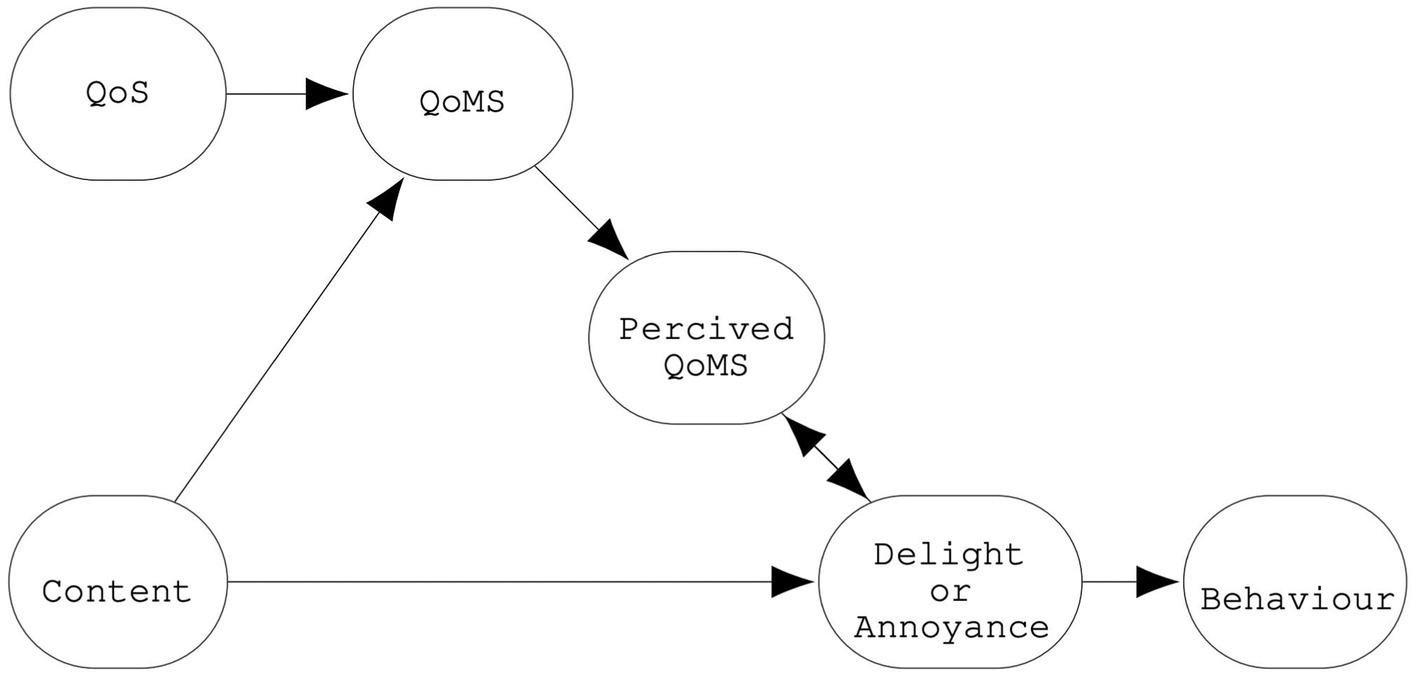

A research article entitled “Top-down and bottom-up approaches to video quality of experience studies; overview and proposal of a new model” published in Frontiers in Computer Science

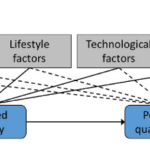

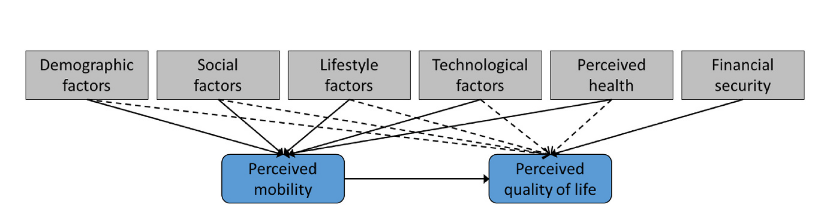

A new article entitled “Influence of mobility and technological factors of mobility on the quality of life of older adults: An empirical study on mobility as a mediator” published in Journal of Transport & Health

Third-year students of the Department of Telecommunications visited BH Telecom as part of their teaching activities in the Microwave Communication Systems course.

News

IEEE News

- 50 Years of The Institute

The Institute is celebrating its 50th anniversary this year. Launched in 1976, the publication was […]

- 7 Ways New Engineers Can Flourish in the Age of AI

New graduates’ careers are unfolding in an era when AI is not optional. The most successful […]

- What It Takes for Future-Ready Power Distribution

This sponsored article is brought to you by Black & Veatch. The biggest challenge facing […]

About Us

The Department of Telecommunications was founded in 1976 and is one of the four departments of the Faculty of Electrical Engineering at the University of Sarajevo. Since 2005, its study programs have been harmonized with the Bologna Declaration and are divided into bachelor's, master's, and doctoral studies.

The three-year bachelor’s study program is oriented towards the fundamentals of engineering practice and telecommunication knowledge. It is accredited by ASIIN, the German member of the European Association for Quality Assurance in Higher Education (ENQA). The two-year master’s study program, on the other hand, is oriented towards practical engineering work and scientific research.