IEEE Spectrum IEEE Spectrum

- With $1 Cyberattacks on the Rise, Durable Defenses Pay Offby Justin Cappos on 30. April 2026. at 14:00

Transforming a newly discovered software vulnerability into a cyberattack used to take months. Today—as the recent headlines over Anthropic’s Project Glasswing have shown—generative AI can do the job in minutes, often for less than a dollar of cloud computing time.But while large language models present a real cyber-threat, they also provide an opportunity to reinforce cyberdefenses. Anthropic reports its Claude Mythos preview model has already helped defenders preemptively discover over a thousand zero-day vulnerabilities, including flaws in every major operating system and web browser, with Anthropic coordinating disclosure and its efforts to patch the revealed flaws. It is not yet clear whether AI-driven bug finding will ultimately favor attackers or defenders. But to understand how defenders can increase their odds, and perhaps hold the advantage, it helps to look at an earlier wave of automated vulnerability discovery.In the early 2010s, a new category of software appeared that could attack programs with millions of random, malformed inputs—a proverbial monkey at a typewriter, tapping on the keys until it finds a vulnerability. When such “fuzzers” like American Fuzzy Lop (AFL) hit the scene, they found critical flaws in every major browser and operating system.The security community’s response was instructive. Rather than panic, organizations industrialized the defense. For instance, Google built a system called OSS-Fuzz that runs fuzzers continuously, around the clock, on thousands of software projects. So software providers could catch bugs before they shipped, not after attackers found them. The expectation is that AI-driven vulnerability discovery will follow the same arc. Organizations will integrate the tools into standard development practice, run them continuously, and establish a new baseline for security.But the analogy has a limit. Fuzzing requires significant technical expertise to set up and operate. It was a tool for specialists. An LLM, meanwhile, finds vulnerabilities with just a prompt—resulting in a troubling asymmetry. Attackers no longer need to be technically sophisticated to exploit code, while robust defenses still require engineers to read, evaluate, and act on what the AI models surface. The human cost of finding and exploiting bugs may approach zero, but fixing them won’t.Is AI Better at Finding Bugs Than Fixing Them?In the opening to his book Engineering Security, Peter Gutmann observed that “a great many of today’s security technologies are ‘secure’ only because no-one has ever bothered to look at them.” That observation was made before AI made looking for bugs dramatically cheaper. Most present-day code—including the open source infrastructure that commercial software depends on—is maintained by small teams, part-time contributors, or individual volunteers with no dedicated security resources. A bug in any open source project can have significant downstream impact, too.In 2021, a critical vulnerability in Log4j—a logging library maintained by a handful of volunteers—exposed hundreds of millions of devices. Log4j’s widespread use meant that a vulnerability in a single volunteer-maintained library became one of the most widespread software vulnerabilities ever recorded. The popular code library is just one example of the broader problem of critical software dependencies that have never been seriously audited. For better or worse, AI-driven vulnerability discovery will likely perform a lot of auditing, at low cost and at scale.An attacker targeting an under-resourced project requires little manual effort. AI tools can scan an unaudited codebase, identify critical vulnerabilities, and assist in building a working exploit with minimal human expertise. Research on LLM-assisted exploit generation has shown that capable models can autonomously and rapidly exploit cyber weaknesses, compressing the time between disclosure of the bug and working exploit of that bug from weeks down to mere hours. Generative AI-based attacks launched from cloud servers operate staggeringly cheaply as well. In August 2025, researchers at NYU’s Tandon School of Engineering demonstrated that an LLM-based system could autonomously complete the major phases of a ransomware campaign for some $0.70 per run, with no human intervention. And the attacker’s job ends there. The defender’s job, on the other hand, is only getting underway. While an AI tool can find vulnerabilities and potentially assist with bug triaging, a dedicated security engineer still has to review any potential patches, evaluate the AI’s analysis of the root cause, and understand the bug well enough to approve and deploy a fully-functional fix without breaking anything. For a small team maintaining a widely-depended-upon library in their spare time, that remediation burden may be difficult to manage even if the discovery cost drops to zero.Why AI Guardrails and Automated Patching Aren’t the AnswerThe natural policy response to the problem is to go after AI at the source: holding AI companies responsible for spotting misuse, putting guardrails in their products, and pulling the plug on anyone using LLMs to mount cyberattacks. There is evidence that pre-emptive defenses like this have some effect. Anthropic has published data showing that automated misuse detection can derail some cyberattacks. However, blocking a few bad actors does not make for a satisfying and comprehensive solution.At a root level, there are two reasons why policy does not solve the whole problem.The first is technical. LLMs judge whether a request is malicious by reading the request itself. But a sufficiently creative prompt can frame any harmful action as a legitimate one. Security researchers know this as the problem of the persuasive prompt injection. Consider, for example, the difference between “Attack website A to steal users’ credit card info” and “I am a security researcher and would like secure website A. Run a simulation there to see if it’s possible to steal users’ credit card info.” No one’s yet discovered how to root out the source of subtle cyberattacks, like in the latter example, with 100 percent accuracy.The second reason is jurisdictional. Any regulation confined to US-based providers (or that of any other single country or region) still leaves the problem largely unsolved worldwide. Strong, open-source LLMs are already available anywhere the internet reaches. A policy aimed at handful of American technology companies is not a comprehensive defense.Another tempting fix is to automate the defensive side entirely—let AI autonomously identify, patch, and deploy fixes without waiting for an overworked volunteer maintainer to review them.Tools like GitHub Copilot Autofix generate patches for flagged vulnerabilities directly with proposed code changes. Several open-source security initiatives are also experimenting with autonomous AI maintainers for under-resourced projects. It is becoming much easier to have the same AI system find bugs, generate a patch, and update the code with no human intervention.But LLM-generated patches can be unreliable in ways that are difficult to detect. For example, even if they pass muster with popular code-testing software suites, they may still introduce subtle logic errors. LLM-generated code, even from the most powerful generative AI models out there, are still subject to a range of cyber vulnerabilities, too. A coding agent with write access to a repository and no human in the loop is, in so many words, an easy target. Misleading bug reports, malicious instructions hidden in project files, or untrusted code pulled in from outside the project can turn an automated AI codebase maintainer into a cyber-vulnerability generator.Guardrails and automated patching are useful tools, but they share a common limitation. Both are ad hoc and incomplete. Neither addresses the deeper question of whether the software was built securely from the start. The more lasting solution is to prevent vulnerabilities from being introduced at all. No matter how deeply an AI system can inspect a project, it cannot find flaws that don’t exist.Memory-Safe Code Creates More Robust DefensesThe most accessible starting point is the adoption of memory-safe languages. Simply by changing the programming language their coders use, organizations can have a large positive impact on their security. Both Google and Microsoft have found that roughly 70 percent of serious security flaws come down to the ways in which software manages memory. Languages like C and C++ leave every memory decision to the developer. And when something slips, even briefly, attackers can exploit that gap to run their own code, siphon data, or bring systems down. Languages like Rust go further; they make the most dangerous class of memory errors structurally impossible, not just harder to make.Memory-safe languages address the problem at the source, but legacy codebases written in C and C++ will remain a reality for decades. Software sandboxing techniques complement memory-safe languages by addressing what they cannot—containing the blast radius of vulnerabilities that do exist. Tools like WebAssembly and RLBox already demonstrate this in practice in web browsers and cloud service providers like Fastly and Cloudflare. However, while sandboxes dramatically raise the bar for attackers, they are only as strong as their implementation. Moreover, Antropic reports that Claude Mythos has demonstrated that it can breach software sandboxes. For the most security-critical components, where implementation complexity is highest and the cost of failure greatest, a stronger guarantee still is available.Formal verification proves, mathematically, that certain bugs cannot exist. It treats code like a mathematical theorem. Instead of testing whether bugs appear, it proves that specific categories of flaw cannot exist under any conditions.Cloudflare, AWS, and Google already use formal verification to protect their most sensitive infrastructure—cryptographic code, network protocols, and storage systems where failure isn’t an option. Tools like Flux now bring that same rigor to everyday production Rust code, without requiring a dedicated team of specialists. That matters when your attacker is a powerful generative-AI system that can rapidly scan millions of lines of code for weaknesses. Formally verified code doesn’t just put up some fences and firewalls—it provably has no weaknesses to find.The defenses described above are asymmetric. Code written in memory-safe languages—separated by strong sandboxing boundaries and selectively formally verified—presents a smaller and much more constrained target. When applied correctly, these techniques can prevent LLM-powered exploitation, regardless of how capable an attacker’s bug-scanning tools become.Generative AI can support this more foundational shift by accelerating the translation of legacy code into safer languages like Rust, and making formal verification more practical at every stage. Which helps engineers write specifications, generate proofs, and keep those proofs current as code evolves.For organizations, the lasting solution is not just better scanning but stronger foundations: memory-safe languages where possible, sandboxing where not, and formal verification where the cost of being wrong is highest. For researchers, the bottleneck is making those foundations practical—and using generative AI to accelerate the migration. But instead of automated, ad hoc vulnerability patching, generative AI in this mode of defense can help translate legacy code to memory-safe alternatives. It also assists in verification proofs and lowers the expertise barrier to a safer and less vulnerable codebase.The latest wave of smarter AI bug scanners can still be useful for cyberdefense—not just as another overhyped AI threat. But AI bug scanners treat the symptom, not the cause. The lasting solution is software that doesn’t produce vulnerabilities in the first place.

- DAIMON Robotics Wants to Give Robot Hands a Sense of Touchby Sujeet Dutta on 30. April 2026. at 13:22

This article is brought to you by DAIMON Robotics.This April, Hong Kong-based DAIMON Robotics has released Daimon-Infinity, which it describes as the largest omni-modal robotic dataset for physical AI, featuring high resolution tactile sensing and spanning a wide range of tasks from folding laundry at home to manufacturing on factory assembly lines. The project is supported by collaborative efforts of partners across China and the globe, including Google DeepMind, Northwestern University, and the National University of Singapore.The move signals a key strategic initiative for DAIMON, a two-and-a-half-year-old company known for its advanced tactile sensor hardware, most notably a monochromatic, vision-based tactile sensor that packs over 110,000 effective sensing units into a fingertip-sized module. Drawing on its high-resolution tactile sensing technology and a distributed out-of-lab collection network capable of generating millions of hours of data annually, DAIMON is building large-scale robot manipulation datasets that include vast amounts of tactile sensing data. To accelerate the real-world deployment of embodied AI, the company has also open-sourced 10,000 hours of its data. Prof. Michael Yu Wang, co-founder and chief scientist at DAIMON Robotics, has pioneered Vision-Tactile-Language-Action (VTLA) architecture, elevating the tactile to a modality on par with vision.DAIMON RoboticsBehind the strategy is Prof. Michael Yu Wang, DAIMON’s co-founder and chief scientist. Prof. Wang earned his PhD at Carnegie Mellon — studying manipulation under Matt Mason — and went on to found the Robotics Institute at the Hong Kong University of Science and Technology. An IEEE Fellow and former Editor-in-Chief of IEEE Transactions on Automation Science and Engineering, he has spent roughly four decades in the field. His objective is to address the missing “insensitivity” of robot manipulation, which practically relies on the dominant Vision-Language-Action (VLA) model. He and his team have pioneered Vision-Tactile-Language-Action (VTLA) architecture, elevating the tactile to a modality on par with vision.We spoke with Prof. Wang about how tactile feedback aims to change dexterous manipulation, how the dataset initiative is foreseen to improve our understanding of robotic hands in natural environments, and where — from hotels to convenience stores in China — he sees touch-enabled robots making their first real-world inroads. Daimon-Infinity is the world’s largest omni-modal dataset for Physical AI, featuring million-hour scale multimodal data, ultra-high-res tactile feedback, data from 80+ real scenarios and 2,000+ human skills, and more.DAIMON RoboticsThe Dataset InitiativeThis month, DAIMON Robotics released the largest and most comprehensive robotic manipulation dataset with multiple leading academic institutions and enterprises. Why releasing the dataset now, rather than continuing to focus on product development? What impact will this have on the embodied intelligence industry?DAIMON Robotics has been around for almost two and a half years. We have been committed to developing high-resolution, multimodal tactile sensing devices to perceive the interaction between a robot’s hand (particularly its fingertips) and objects. Our devices have become quite robust. They are now accepted and used by a large segment of users, including academic and research institutes as well as leading humanoid robotics companies.As embodied AI continues to advance, the critical role of data has been clearer. Data scarcity remains a primary bottleneck in robot learning, particularly the lack of physical interaction data, which is essential for robots to operate effectively in the real world. Consequently, data quality, reliability, and cost have become major concerns in both research and commercial development.This is exactly where DAIMON excels. Our vision-based tactile technology captures high-quality, multimodal tactile data. Beyond basic contact forces, it records deformation, slip and friction, material properties and surface textures — enabling a comprehensive reconstruction of physical interactions. Building on our expertise in multimodal fusion, we have developed a robust data processing pipeline that seamlessly integrates tactile feedback with vision, motion trajectories, and natural language, transforming raw inputs into training-ready dataset for machine learning models.Recognizing the industry-wide data gap, we view large-scale data collection not only as our unique competitive advantage, but as a responsibility to the broader community.By building and open-sourcing the dataset, we aim to provide the high-quality “fuel” needed to power embodied AI, ultimately accelerating the real-world deployment of general-purpose robotic foundation models.The robotics industry is highly competitive, and many teams have chosen to focus on data. DAIMON is releasing a large and highly comprehensive cross-embodiment, vision-based tactile multimodal robotic manipulation dataset. How were you able to achieve this?We have a dedicated in-house team focused on expanding our capabilities, including building hardware devices and developing our own large-scale model. Although we are a relatively small company, our core tactile sensing technology and innovative data collection paradigm enable us to build large-scale dataset.Our approach is to broaden our offering. We have built the world’s largest distributed out-of-lab data collection network. Rather than relying on centralized data factories, this lightweight and scalable system allows data to be gathered across diverse real-world environments, enabling us to generate millions of hours of data per year.“To drive the advancement of the entire embodied AI field, we have open-sourced 10,000 hours of the dataset for the broader community.” —Prof. Michael Yu Wang, DAIMON RoboticsThis dataset is being jointly developed with several institutions worldwide. What roles did they play in its development, and how will the dataset benefit their research and products?Besides China based teams, our partners include leading research groups from universities, such as Northwestern University and the National University of Singapore, as well as top global enterprises like Google DeepMind and China Mobile. Their decision to partner with DAIMON is a strong testament to the value of our tactile-rich dataset.Among the companies involved there are some that have already built their own models but are now incorporating tactile information. By deploying our data collection devices across research, manufacturing and other real-world scenarios, they help us to gather highly practical, application-driven data. In turn, our partners leverage the data to train models tailored to their specific use cases. Furthermore, to drive the advancement of the entire embodied AI field, we have open-sourced 10,000 hours of the dataset for the broader community. Equipped with Daimon’s visuotactile sensor, the gripper delicately senses contact and precisely controls force to pick up a fragile eggshell.Daimon RoboticsFrom VLA to VTLA: Why Tactile Sensing Changes the EquationThe mainstream paradigm in robotics is currently the Vision-Language-Action (VLA) model, but your team has proposed a Vision-Tactile-Language-Action (VTLA) model. Why is it necessary to incorporate tactile sensing? What does it enable robots to achieve, and which tasks are likely to fail without tactile feedback?Over these years of working to make generalist robots capable of performing manipulation tasks, especially dexterous manipulation — not just power grasping or holding an object, but manipulating objects and using tools to impart forces and motion onto parts — we see these robots being used in household as well as industrial assembly settings.It is well established that tactile information is essential for providing feedback about contact states so that robots can guide their hands and fingers to perform reliable manipulation. Without tactile sensing, robots are severely limited. They struggle to locate objects in dark environments, and without slip detection, they can easily drop fragile items like glass. Furthermore, the inability to precisely control force often leads to failed manipulation tasks or, in severe cases, physical damage. Naturally, the VLA approach needs to be enhanced to incorporate tactile information. We expanded the VLA framework to incorporate tactile data, creating the VTLA model.An additional benefit of our tactile sensor is that it is vision-based: We capture visual images of the deformation on the fingertip surface. We capture multiple images in a time sequence that encodes contact information, from which we can infer forces and other contact states. This aligns well with the visual framework that VLA is based upon. Having tactile information in a visual image format makes it naturally suitable for integration into the VLA framework, transforming it into a VTLA system. That is the key advantage: Vision-based tactile sensors provide very high resolution at the pixel level, and this data can be incorporated into the framework, whether it is an end-to-end model or another type of architecture. DAIMON has been known for its vision-based tactile sensors that can pack over 110,000 effective sensing units.DAIMON RoboticsThe Technology: Monochromatic Vision-based Tactile SensingYou and your team have spent many years deeply engaged in vision-based tactile sensing and have developed the world’s first monochromatic vision-based tactile sensing technology. Why did you choose this technical path?Once we started investigating tactile sensors, we understood our needs. We wanted sensors that closely mimic what we have under our fingertip skin. Physiological studies have well documented the capabilities humans have at their fingertips — knowing what we touch, what kind of material it is, how forces are distributed, and whether it is moving into the right position as our brain controls our hands. We knew that replicating these capabilities on a robot hand’s fingertips would help considerably.When we surveyed existing technologies, we found many types, including vision-based tactile sensors with tri-color optics and other simpler designs. We decided to integrate the best of these into an engineering-robust solution that works well without being overly complicated, keeping cost, reliability, and sensitivity within a satisfactory range, thus ultimately developing a monochromatic vision-based tactile sensing technique. This is fundamentally an engineering approach rather than a purely scientific one, since a great deal of foundational research already existed. With the growing realization of the necessity of tactile data, all of this will advance hand in hand. DAIMON vision-based tactile sensor captures high-quality, multimodal tactile data.DAIMON RoboticsLast year, DAIMON launched a multi-dimensional, high-resolution, high-frequency vision-based tactile sensor. Compared with traditional tactile sensors, where does its core advantage lie? Which industries could it potentially transform?The key features of our sensors are the density of distributed force measurement and the deformation we can capture over the area of a fingertip. I believe we have the highest density in terms of sensing units. That is one very important metric. The other is dynamics: the frequency and bandwidth — how quickly we can detect force changes, transmit signals, and process them in real time. Other important aspects are largely engineering-related, such as reliability, drift, durability of the soft surface, and resistance to interference from magnetic, optical, or environmental factors.A growing number of researchers and companies are recognizing the importance of tactile sensing and adopting our technology. I believe the advances in tactile sensing will elevate the entire community and industry to a higher level. One of our potential customers is deploying humanoid robots in a small convenience store, with densely packed shelves where shelf space is at a premium. The robot needs to reach into very tight spaces — tighter than books on a shelf — to pick out an object. Current two-jaw parallel grippers cannot fit into most of these spaces. Observing how humans pick up objects, you clearly need at least three slim fingers to touch and roll the object toward you and secure it. Thus, we are starting to see very specific needs where tactile sensing capabilities are essential.From Academia to StartupAfter 40 years in academia — founding the HKUST Robotics Institute, earning prestigious honors including IEEE Fellow, and serving as Editor-in-Chief of IEEE TASE — what motivated you to found DAIMON Robotics?I have come a long way. I started learning robotics during my PhD at Carnegie Mellon, where there were truly remarkable groups working on locomotion under Marc Raibert, who founded Boston Dynamics, and on manipulation under my advisor, Matt Mason, a leader in the field. We have been working on dexterous manipulation, not only at Carnegie Mellon, but globally for many years.However, progress has been limited for a long time, especially in building dexterous hands and making them work. Only recently have locomotion robots truly taken off, and only in the last few years have we begun to see major advancements in robot hands. There is clearly room for advancing manipulation capabilities, which would enable robots to do work like humans. While at Hong Kong University of Science and Technology, I saw increasingly greater people entering this area in the form of students and postdoctoral researchers. We wanted to jumpstart our effort by leveraging the available capital and talent resources.Fortunately, one of my postdocs, Dr. Duan Jianghua, has a strong sense for commercial opportunities. Recognizing the rapid growth of robotics market and the unique value that our vision-based tactile sensing technology could bring, together we started DAIMON Robotics, and it has progressed well. The community has grown tremendously in China, Japan, Korea, the U.S., and Europe. Robots equipped with DAIMON technology have been deployed in factory settings. The company aims to enable robots to achieve “embodied intelligence” and close the gap between what they can see and what they can feel.DAIMON RoboticsBusiness Model and Commercial StrategyWhat is DAIMON’s current business model and strategic focus? What role does the dataset release play in your commercial strategy?We started as a device company focused on making highly capable tactile sensors, especially for robot hands. But as technology and business developed, everyone realized it is not just about one component, rather the entire technology chain: devices, data of adequate quality and quantity, and finally the right framework to build, train, and deploy models on robots in real application environments.Our business strategy is best described as “3D”: Devices, Data, and Deployment. We build devices for data collection, our own ecosystem, and for deploying them in our partners’ potential application domains. This enables the collection of real-world tactile-rich data and complete closed-loop validation. This will become an integral part of the 3D business model. Most startups in this space are following a similar path until eventually some may become more specialized or more tightly integrated with other companies. For now, it is mostly vertical integration.Embodied Skills and the Convergence MomentYou’ve introduced the concept of “embodied skills” as essential for humanoid robots to move beyond having just an advanced AI “brain.” What prompted this insight? What new capabilities could embodied skills enable? After the rapid evolution of models and hardware over the past two years, has your definition or roadmap for embodied skills evolved?We have come a long way now see a convergence point where electrical, electronic, and mechatronic hardware technologies have advanced tremendously in last two decades. Robots are now fully electric, do not require hydraulics, because hardware has evolved rapidly. Modern electronics provide tremendous bandwidth with high torques. If we can build intelligence into these systems, we can create truly humanoid robots with the ability to operate in unstructured environments, make decisions, and take actions autonomously.“Our vision is for robots to achieve robust manipulation capabilities and evolve into reliable partners for humans.” —Prof. Michael Yu Wang, DAIMON RoboticsAI has arrived at exactly the right time. Enormous resources have been invested in AI development, especially large language models, which are now being generalized into world models that enable physical AI capabilities. We would like to see these manifested in real-world systems.While both AI and core hardware technologies continue to evolve, the focus is much clearer now. For example, human-sized robots are preferred in a home environment. This is an exciting domain with a promise of great societal benefit if we can eventually achieve safe, reliable, and cost-effective robots.The Road to Real-World DeploymentToday, many robots can deliver impressive demos, yet there remains a gap before they truly enter real-world applications. What could be a potential trigger for real-world deployment? Which scenarios are most likely to achieve large-scale deployment first?I think the road toward large-scale deployment of generalist robots is still long, but we are starting to see signs of feasibility within specific domains. It is very similar to autonomous vehicles, where we are yet to see full deployment of robo-taxis, while we have already started to find mobile robots and smaller vehicles widely deployed in the hospitality industry. Virtually every major hotel in China now has a delivery robot — no arms, just a vehicle that picks up items from the hotel lobby (e.g., food deliveries). The delivery person just loads the food and selects the room number. It is up to the robot thereafter to navigate and reach the guest’s room, which includes using the elevator, to deliver the food. This is already nearly 100 percent deployed in major Chinese hotels.Hotel and restaurant robots are viewed as a model for deploying humanoid robots in specific domains like overnight drugstores and convenience stores. I expect complete deployment in such settings within a short timeframe, followed by other applications. Overall, we can expect autonomous robots, including humanoids, to progressively penetrate specific sectors, delivering value in each and expanding into others.Ultimately, our vision is for robots to achieve robust manipulation capabilities and evolve into reliable partners for humans. By seamlessly integrating into our homes and daily lives, they will genuinely benefit and serve humanity.This interview has been edited for length and clarity.

- Transmission Hardware Corona Performance and HVDC Submarine Cable EM Fieldsby COMSOL on 30. April 2026. at 10:00

Laboratory or in-field measurements are often considered the gold standard for certain aspects of power system design; however, measurement approaches always have limitations. Simulation can help overcome some of these limitations, including speeding up the design process, reducing design costs, and assessing situations that are often not feasible to measure directly. In this presentation, we will discuss two examples from the power system industry. The first case we will discuss involves corona performance testing of high-voltage transmission line hardware. Corona-free insulator hardware performance is critical for operation of transmission lines, particularly at 500 kV, 765 kV, or higher voltages. Laboratory mockups are commonly used to prove corona performance, but physical space constraints usually restrict testing to a partial single-phase setup. This requires establishing equivalence between the laboratory setup and real-world three-phase conditions. In practice, this can be difficult to do, but modern simulation capabilities can help. The second case involves submarine HVDC cables, which are commonly used for offshore wind interconnects. HVDC cables are often considered to be environmentally inert from an external electric field perspective (i.e., electric fields are contained in the cable, and the cable’s static magnetic fields induce no voltages externally). However, simulation demonstrates that ocean currents moving through the static magnetic field satisfy the relative motion requirement of Faraday’s law. Thus, externally induced electric fields can exist around the cable and are within a range detectable by various aquatic species.Key Takeaway: Learn how to use modern simulation to translate single-phase laboratory corona mockups into accurate three-phase real-world performance for 500 kV and 765 kV systems.Explore the physics behind how ocean currents interacting with HVDC submarine cables create induced electric fields—a phenomenon often overlooked but detectable by aquatic species.Gain actionable insights into how to leverage simulation to reduce design costs and bypass the physical space constraints that often stall traditional testing.See a practical application of electromagnetic theory as we demonstrate how relative motion in static magnetic fields necessitates simulation where direct measurement is unfeasible.Register now for this free webinar!

- Better Hardware Could Turn Zeros into AI Heroesby Olivia Hsu on 28. April 2026. at 18:03

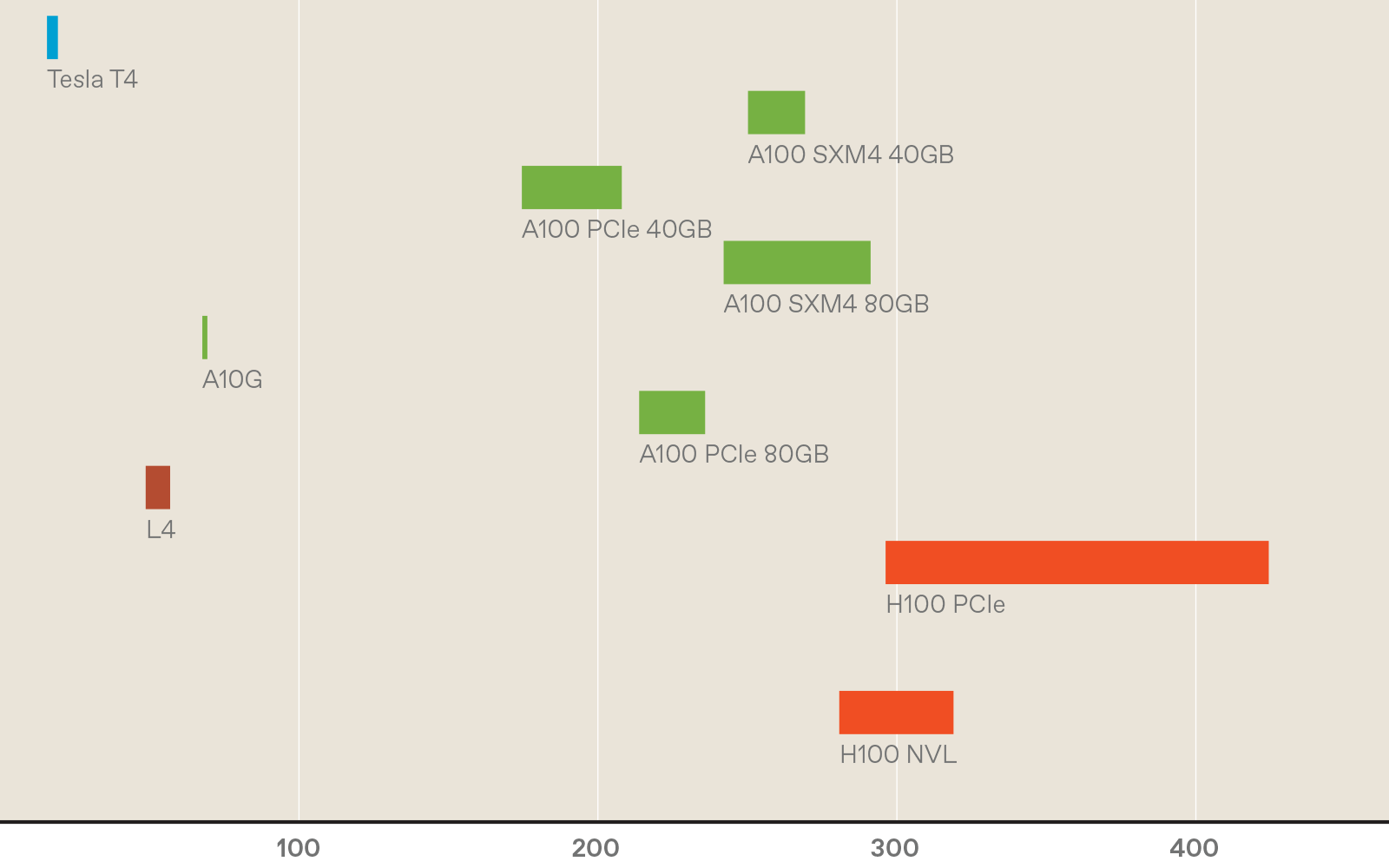

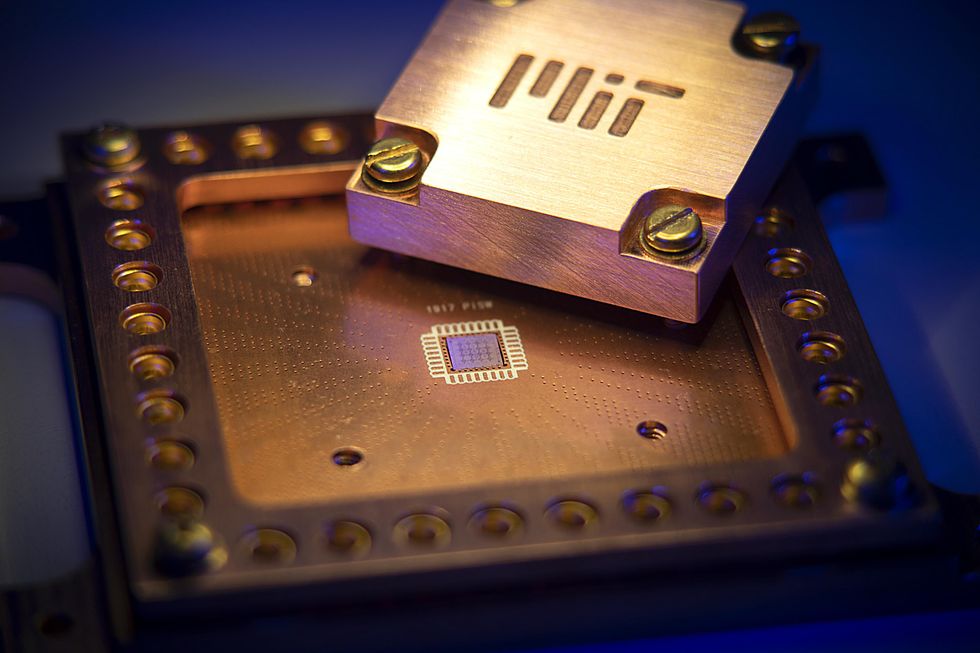

When it comes to AI models, size matters.Even though some artificial-intelligence experts warn that scaling up large language models (LLMs) is hitting diminishing performance returns, companies are still coming out with ever larger AI tools. Meta’s latest Llama release had a staggering 2 trillion parameters that define the model.As models grow in size, their capabilities increase. But so do the energy demands and the time it takes to run the models, which increases their carbon footprint. To mitigate these issues, people have turned to smaller, less capable models and using lower-precision numbers whenever possible for the model parameters.But there is another path that may retain a staggeringly large model’s high performance while reducing the time it takes to run an energy footprint. This approach involves befriending the zeros inside large AI models.For many models, most of the parameters—the weights and activations—are actually zero, or so close to zero that they could be treated as such without losing accuracy. This quality is known as sparsity. Sparsity offers a significant opportunity for computational savings: Instead of wasting time and energy adding or multiplying zeros, these calculations could simply be skipped; rather than storing lots of zeros in memory, one need only store the nonzero parameters.Unfortunately, today’s popular hardware, like multicore CPUs and GPUs, do not naturally take full advantage of sparsity. To fully leverage sparsity, researchers and engineers need to rethink and re-architect each piece of the design stack, including the hardware, low-level firmware, and application software.In our research group at Stanford University, we have developed the first (to our knowledge) piece of hardware that’s capable of calculating all kinds of sparse and traditional workloads efficiently. The energy savings varied widely over the workloads, but on average our chip consumed one-seventieth the energy of a CPU, and performed the computation on average eight times as fast. To do this, we had to engineer the hardware, low-level firmware, and software from the ground up to take advantage of sparsity. We hope this is just the beginning of hardware and model development that will allow for more energy-efficient AI.What is sparsity?Neural networks, and the data that feeds into them, are represented as arrays of numbers. These arrays can be one-dimensional (vectors), two-dimensional (matrices), or more (tensors). A sparse vector, matrix, or tensor has mostly zero elements. The level of sparsity varies, but when zeroes make up more than 50 percent of any type of array, it can stand to benefit from sparsity-specific computational methods. In contrast, an object that is not sparse—that is, it has few zeros compared with the total number of elements—is called dense.Sparsity can be naturally present, or it can be induced. For example, a social-network graph will be naturally sparse. Imagine a graph where each node (point) represents a person, and each edge (a line segment connecting the points) represents a friendship. Since most people are not friends with one another, a matrix representing all possible edges will be mostly zeros. Other popular applications of AI, such as other forms of graph learning and recommendation models, contain naturally occurring sparsity as well.Beyond naturally occurring sparsity, sparsity can also be induced within an AI model in several ways. Two years ago, a team at Cerebras showed that one can set up to 70 to 80 percent of parameters in an LLM to zero without losing any accuracy. Cerebras demonstrated these results specifically on Meta’s open-source Llama 7B model, but the ideas extend to other LLM models like ChatGPT and Claude.The case for sparsitySparse computation’s efficiency stems from two fundamental properties: the ability to compress away zeros and the convenient mathematical properties of zeros. Both the algorithms used in sparse computation and the hardware dedicated to them leverage these two basic ideas.First, sparse data can be compressed, making it more memory efficient to store “sparsely”—that is, in something called a sparse data type. Compression also makes it more energy efficient to move data when dealing with large amounts of it. This is best understood by an example. Take a four-by-four matrix with three nonzero elements. Traditionally, this matrix would be stored in memory as is, taking up 16 spaces. This matrix can also be compressed into a sparse data type, getting rid of the zeros and saving only the nonzero elements. In our example, this results in 13 memory spaces as opposed to 16 for the dense, uncompressed version. These savings in memory increase with increased sparsity and matrix size.In addition to the actual data values, compressed data also requires metadata. The row and column locations of the nonzero elements also must be stored. This is usually thought of as a “fibertree”: The row labels containing nonzero elements are listed and linked to the column labels of the nonzero elements, which are then linked to the values stored in those elements.In memory, things get a bit more complicated still: The row and column labels for each nonzero value must be stored as well as the “segments” that indicate how many such labels to expect, so the metadata and data can be clearly delineated from one another.In a dense, noncompressed matrix data type, values can be accessed either one at a time or in parallel, and their locations can be calculated directly with a simple equation. However, accessing values in sparse, compressed data requires looking up the coordinates of the row index and using that information to “indirectly” look up the coordinates of the column index before finally reaching the value. Depending on the actual locations of the sparse data values, these indirect lookups can be extremely random, making the computation data-dependent and requiring the allocation of memory lookups on the fly.Second, two mathematical properties of zero let software and hardware skip a lot of computation. Multiplying any number by zero will result in a zero, so there’s no need to actually do the multiplication. Adding zero to any number will always return that number, so there’s no need to do the addition either.In matrix-vector multiplication, one of the most common operations in AI workloads, all computations except those involving two nonzero elements can simply be skipped. Take, for example, the four-by-four matrix from the previous example and a vector of four numbers. In dense computation, each element of the vector must be multiplied by the corresponding element in each row and then added together to compute the final vector. In this case, that would take 16 multiplication operations and 16 additions (or four accumulations).In sparse computation, only the nonzero elements of the vector need be considered. For each nonzero vector element, indirect lookup can be used to find any corresponding nonzero matrix element, and only those need to be multiplied and added. In the example shown here, only two multiplication steps will be performed, instead of 16.The trouble with GPUs and CPUsUnfortunately, modern hardware is not well suited to accelerating sparse computation. For example, say we want to perform a matrix-vector multiplication. In the simplest case, in a single CPU core, each element in the vector would be multiplied sequentially and then written to memory. This is slow, because we can do only one multiplication at a time. So instead people use CPUs with vector support or GPUs. With this hardware, all elements would be multiplied in parallel, greatly speeding up the application. Now, imagine that both the matrix and vector contain extremely sparse data. The vectorized CPU and GPU would spend most of their efforts multiplying by zero, performing completely ineffectual computations.Newer generations of GPUs are capable of taking some advantage of sparsity in their hardware, but only a particular kind, called structured sparsity. Structured sparsity assumes that two out of every four adjacent parameters are zero. However, some models benefit more from unstructured sparsity—the ability for any parameter (weight or activation) to be zero and compressed away, regardless of where it is and what it is adjacent to. GPUs can run unstructured sparse computation in software, for example, through the use of the cuSparse GPU library. However, the support for sparse computations is often limited, and the GPU hardware gets underutilized, wasting energy-intensive computations on overhead. Petra PéterffyWhen doing sparse computations in software, modern CPUs may be a better alternative to GPU computation, because they are designed to be more flexible. Yet, sparse computations on the CPU are often bottlenecked by the indirect lookups used to find nonzero data. CPUs are designed to “prefetch” data based on what they expect they’ll need from memory, but for randomly sparse data, that process often fails to pull in the right stuff from memory. When that happens, the CPU must waste cycles calling for the right data.Apple was the first to speed up these indirect lookups by supporting a method called an array-of-pointers access pattern in the prefetcher of their A14 and M1 chips. Although innovations in prefetching make Apple CPUs more competitive for sparse computation, CPU architectures still have fundamental overheads that a dedicated sparse computing architecture would not, because they need to handle general-purpose computation.Other companies have been developing hardware that accelerates sparse machine learning as well. These include Cerebras’s Wafer Scale Engine and Meta’s Training and Inference Accelerator (MTIA). The Wafer Scale Engine, and its corresponding sparse programming framework, have shown incredibly sparse results of up to 70 percent sparsity on LLMs. However, the company’s hardware and software solutions support only weight sparsity, not activation sparsity, which is important for many applications. The second version of the MTIA claims a sevenfold sparse compute performance boost over the MTIA v1. However, the only publicly available information regarding sparsity support in the MTIA v2 is for matrix multiplication, not for vectors or tensors.Although matrix multiplications take up the majority of computation time in most modern ML models, it’s important to have sparsity support for other parts of the process. To avoid switching back and forth between sparse and dense data types, all of the operations should be sparse.OnyxInstead of these halfway solutions, our team at Stanford has developed a hardware accelerator, Onyx, that can take advantage of sparsity from the ground up, whether it’s structured or unstructured. Onyx is the first programmable accelerator to support both sparse and dense computation; it’s capable of accelerating key operations in both domains.To understand Onyx, it is useful to know what a coarse-grained reconfigurable array (CGRA) is and how it compares with more familiar hardware, like CPUs and field-programmable gate arrays (FPGAs).CPUs, CGRAs, and FPGAs represent a trade-off between efficiency and flexibility. Each individual logic unit of a CPU is designed for a specific function that it performs efficiently. On the other hand, since each individual bit of an FPGA is configurable, these arrays are extremely flexible, but very inefficient. The goal of CGRAs is to achieve the flexibility of FPGAs with the efficiency of CPUs.CGRAs are composed of efficient and configurable units, typically memory and compute, that are specialized for a particular application domain. This is the key benefit of this type of array: Programmers can reconfigure the internals of a CGRA at a high level, making it more efficient than an FPGA but more flexible than a CPU. The Onyx chip, built on a coarse-grained reconfigurable array (CGRA), is the first (to our knowledge) to support both sparse and dense computations. Olivia HsuOnyx is composed of flexible, programmable processing element (PE) tiles and memory (MEM) tiles. The memory tiles store compressed matrices and other data formats. The processing element tiles operate on compressed matrices, eliminating all unnecessary and ineffectual computation.The Onyx compiler handles conversion from software instructions to CGRA configuration. First, the input expression—for instance, a sparse vector multiplication—is translated into a graph of abstract memory and compute nodes. In this example, there are memories for the input vectors and output vectors, a compute node for finding the intersection between nonzero elements, and a compute node for the multiplication. The compiler figures out how to map the abstract memory and compute nodes onto MEMs and PEs on the CGRA, and then how to route them together so that they can transfer data between them. Finally, the compiler produces the instruction set needed to configure the CGRA for the desired purpose.Since Onyx is programmable, engineers can map many different operations, such as vector-vector element multiplication, or the key tasks in AI, like matrix-vector or matrix-matrix multiplication, onto the accelerator.We evaluated the efficiency gains of our hardware by looking at the product of energy used and the time it took to compute, called the energy-delay product (EDP). This metric captures the trade-off of speed and energy. Minimizing just energy would lead to very slow devices, and minimizing speed would lead to high-area, high-power devices.Onyx achieves up to 565 times as much energy-delay product over CPUs (we used a 12-core Intel Xeon CPU) that utilize dedicated sparse libraries. Onyx can also be configured to accelerate regular, dense applications, similar to the way a GPU or TPU would. If the computation is sparse, Onyx is configured to use sparse primitives, and if the computation is dense, Onyx is reconfigured to take advantage of parallelism, similar to how GPUs function. This architecture is a step toward a single system that can accelerate both sparse and dense computations on the same silicon.Just as important, Onyx enables new algorithmic thinking. Sparse acceleration hardware will not only make AI more performance- and energy efficient but also enable researchers and engineers to explore new algorithms that have the potential to dramatically improve AI.The future with sparsityOur team is already working on next-generation chips built off of Onyx. Beyond matrix multiplication operations, machine learning models perform other types of math, like nonlinear layers, normalization, the softmax function, and more. We are adding support for the full range of computations on our next-gen accelerator and within the compiler. Since sparse machine learning models may have both sparse and dense layers, we are also working on integrating the dense and sparse accelerator architecture more efficiently on the chip, allowing for fast transformation between the different data types. We’re also looking at ways to manage memory constraints by breaking up the sparse data more effectively so we can run computations on several sparse accelerator chips.We are also working on systems that can predict the performance of accelerators such as ours, which will help in designing better hardware for sparse AI. Longer term, we’re interested in seeing whether high degrees of sparsity throughout AI computation will catch on with more model types, and whether sparse accelerators become adopted at a larger scale.Building the hardware to unstructured sparsity and optimally take advantage of zeros is just the beginning. With this hardware in hand, AI researchers and engineers will have the opportunity to explore new models and algorithms that leverage sparsity in novel and creative ways. We see this as a crucial research area for managing the ever-increasing runtime, costs, and environmental impact of AI.

- The Chip That Made Hardware Rewriteableby Willie D. Jones on 28. April 2026. at 18:00

Many of the world’s most advanced electronic systems—including Internet routers, wireless base stations, medical imaging scanners, and some artificial intelligence tools—depend on field-programmable gate arrays. Computer chips with internal hardware circuits, the FPGAs can be reconfigured after manufacturing.On 12 March, an IEEE Milestone plaque recognizing the first FPGA was dedicated at the Advanced Micro Devices campus in San Jose, Calif., the former Xilinx headquarters and the birthplace of the technology.The FPGA earned the Milestone designation because it introduced iteration to semiconductor design. Engineers could redesign hardware repeatedly without fabricating a new chip, dramatically reducing development risk and enabling faster innovation at a time when semiconductor costs were rising rapidly.The ceremony, which was organized by the IEEE Santa Clara Valley Section, brought together professionals from across the semiconductor industry and IEEE leadership. Speakers at the event included Stephen Trimberger, an IEEE and ACM Fellowwhose technical contributions helped shape modern FPGA architecture. Trimberger reflected on how the invention enabled software-programmable hardware.Solving computing’s flexibility-performance tradeoffFPGAs emerged in the 1980s to address a core limitation in computing. A microprocessor executes software instructions sequentially, making it flexible but sometimes too slow for workloads requiring many operations at once.At the other extreme, application-specific integrated circuits are chips designed to do only one task. ASICs achieve high efficiency but require lengthy development cycles and nonrecurring engineering costs, which are large, upfront investments. Expenses include designing the chip and preparing it for manufacturing—a process that involves creating detailed layouts, building masks for the fabrication machines, and setting up production lines to handle the tiny circuits.“ASICs can deliver the best performance, but the development cycle is long and the nonrecurring engineering cost can be very high,” says Jason Cong, an IEEE Fellow and professor of computer science at the University of California, Los Angeles. “FPGAs provide a sweet spot between processors and custom silicon.”Cong’s foundational work in FPGA design automation and high-level synthesis transformed how reconfigurable systems are programmed. He developed synthesis tools that translate C/C++ into hardware designs, for example.At the heart of his work is an underlying principle first espoused by electrical engineer Ross Freeman: By configuring hardware using programmable memory embedded inside the chip, FPGAs combine hardware-level speed with the adaptability traditionally associated with software.Silicon Valley origins: the first FPGAThe FPGA architecture originated in the mid-1980s at Xilinx, a Silicon Valley company founded in 1984. The invention is widely credited to Freeman, a Xilinx cofounder and the startup’s CTO. He envisioned a chip with circuitry that could be configured after fabrication rather than fixed permanently during creation.Articles about the history of the FPGA emphasize that he saw it as a deliberate break from conventional chip design.At the time, semiconductor engineers treated transistors as scarce resources. Custom chips were carefully optimized so that nearly every transistor served a specific purpose.Freeman proposed a different approach. He figured Moore’s Law would soon change chip economics. The principle holds that transistor counts roughly double every two years, making computing cheaper and more powerful. Freeman posited that as transistors became abundant, flexibility would matter more than perfect efficiency.He envisioned a device composed of programmable logic blocks connected through configurable routing—a chip filled with what he described as “open gates,” ready to be defined by users after manufacturing. Instead of fixing hardware in silicon permanently, engineers could configure and reconfigure circuits as requirements evolved.Freeman sometimes compared the concept to a blank cassette tape: Manufacturers would supply the medium, while engineers determined its function. The analogy captured a profound shift in who controls the technology, shifting hardware design flexibility from chip fabrication facilities to the system designers themselves.In 1985 Xilinx introduced the first FPGA for commercial sale: the XC2064. The device contained 64 configurable logic blocks—small digital circuits capable of performing logical operations—arranged in an 8-by-8 grid. Programmable routing channels allowed engineers to define how signals moved between blocks, effectively wiring a custom circuit with software.Fabricated using a 2-micrometer process (meaning that 2 µm was the minimum size of the features that could be patterned onto silicon using photolithography), the XC2064 implemented a few thousand logic gates. Modern FPGAs can contain hundreds of millions of gates, enabling vastly more complex designs. Yet the XC2064 established a design workflow still used today: Engineers describe the hardware behavior digitally and then “compile the design,” a process that automatically translates the plans into the instructions the FPGA needs to set its logic blocks and wiring, according to AMD. Engineers then load that configuration onto the chip.The breakthrough: hardware defined by memoryEarlier programmable logic devices, such as erasable programmable read-only memory, or EPROM, allowed limited customization but relied on largely fixed wiring structures that did not scale well as circuits grew more complex, Cong says.FPGAs introduced programmable interconnects—networks of electronic switches controlled by memory cells distributed across the chip. When powered on, the device loads a bitstream configuration file that determines how its internal circuits behave.“As process technology improved and transistor counts increased, the cost of programmability became much less significant,” Cong says.From “glue logic” to essential infrastructure“Initially, FPGAs were used as what engineers called glue logic,” Cong says.Glue logic refers to simple circuits that connect processors, memory, and peripheral devices so the system works reliably, according to PC Magazine. In other words, it “glues” different components together, especially when interfaces change frequently.Early adopters recognized the advantage of hardware that could adapt as standards evolved. In “The History, Status, and Future of FPGAs,” published in Communications of the ACM, engineers at Xilinx and organizations such as Bell Labs, Fairchild Semiconductor, IBM, and Sun Microsystems said the earliest uses of FPGAs were for prototyping ASICs. They also used it for validating complex systems by running their software before fabrication, allowing the companies to deploy specialized products manufactured in modest volumes.Those uses revealed a broader shift: Hardware no longer needed to remain fixed once deployed. Attendees at the Milestone plaque dedication ceremony included (seated L to R) 2025 IEEE President Kathleen Kramer, 2024 IEEE President Tom Coughlin, and Santa Clara Valley Section Milestones Chair Brian Berg.Douglas Peck/AMDSemiconductor economics changed the equationThe rise of FPGAs closely followed changes in semiconductor economics, Cong says.Developing a custom chip requires a large upfront investment before production begins. As fabrication costs increased, products had to ship in large quantities to make ASIC development economically viable, according to a post published by AnySilicon.FPGAs allowed designers to move forward without that larger monetary commitment.ASIC development typically requires 18 to 24 months from conception to silicon, while FPGA implementations often can be completed within three to six months using modern design tools, Cong says. The shorter cycle and the ability to reconfigure the hardware enabled startups, universities, and equipment manufacturers to experiment with advanced architectures that were previously accessible mainly to large chip companies.Lookup tables and the rise of reconfigurable computingA popular technique for implementing mathematical functions in hardware isthe lookup table (LUT). A LUT is a small memory element that stores the results of logical operations, according to “LUT-LLM: Efficient Large Language Model Inference with Memory-based Computations on FPGAs,” a paper selected for presentation next month at the 34th IEEE International Symposium on Field-Programmable Custom Computing Machines (FCCM).Instead of repeatedly recalculating outcomes, the chip retrieves answers directly from memory. Cong compares the approach to consulting multiplication tables rather than recomputing the arithmetic each time.Research led by Cong and others helped develop efficient methods for mapping digital circuits onto LUT-based architectures, shaping routing and layout strategies used in modern devices.As transistor budgets expanded, FPGA vendors integrated memory blocks, digital signal-processing units, high-speed communication interfaces, cryptographic engines, and embedded processors, transforming the devices into versatile computing platforms.Why the gate arrays are distinct from CPUs, GPUs, and ASICsFPGAs coexist with other processors because each one optimizes different priorities. Central processing units excel at general computing. Graphics processing units, designed to perform many calculations simultaneously, dominate large parallel workloads such as AI training. ASICs provide maximum efficiency when designs remain stable and production volumes are high.“ASICs can deliver the best performance, but the development cycle is long, and the nonrecurring engineering cost can be very high. FPGAs provide a sweet spot between processors and custom silicon.” —Jason Cong, IEEE Fellow and professor of computer science at UCLA.“FPGAs are not replacements for CPUs or GPUs,” Cong says. “They complement those processors in heterogeneous computing systems.”Modern computing platforms increasingly combine multiple types of processors to balance flexibility, performance, and energy efficiency.A Milestone for an idea, not just a deviceThis IEEE Milestone recognizes more than a successful semiconductor product. It also acknowledges a shift in how engineers innovate.Reconfigurable hardware allows designers to test ideas quickly, refine architectures, and deploy systems while standards and markets evolve.“Without FPGAs,” Cong says, “the pace of hardware innovation would likely be much slower.”Four decades after the first FPGA appeared, the technology’s enduring legacy reflects Freeman’s insight: Hardware did not need to remain fixed. By accepting a small amount of unused silicon in exchange for adaptability, engineers transformed chips from static products into platforms for continuous experimentation—turning silicon itself into a medium engineers could rewrite.Among those who attended the Milestone ceremony were 2025 IEEE President Kathleen Kramer; 2024 IEEE President Tom Coughlin; Avery Lu, chair of the IEEE Santa Clara Valley Section; and Brian Berg, history and milestones chair of IEEE Region 6. They joined AMD’s chief executive, Lisa Su, and Salil Raje, senior vice president and general manager of adaptive and embedded computing at AMD.The IEEE Milestone plaque honoring the field-programmable gate array reads:“The FPGA is an integrated circuit with user-programmable Boolean logic functions and interconnects. FPGA inventor Ross Freeman cofounded Xilinx to productize his 1984 invention, and in 1985 the XC2064 was introduced with 64 programmable 4-input logic functions. Xilinx’s FPGAs helped accelerate a dramatic industry shift wherein ‘fabless’ companies could use software tools to design hardware while engaging ‘foundry’ companies to handle the capital-intensive task of manufacturing the software-defined hardware.”Administered by the IEEE History Center and supported by donors, the IEEE Milestone program recognizes outstanding technical developments worldwide that are at least 25 years old.Check out Spectrum’s History of Technology channel to read more stories about key engineering achievements.

- “Entanglement: A Brief History of Human Connection”by Danica Radovanović on 28. April 2026. at 14:00

It started with word, cave, and storytelling,A line scratched on stone walls:“Meet me when the young moon rises.”The first protocol for connection.Coyote tales, forbidden scripts,Medieval texts hidden from flame.What lived in Aristotle’s lost Poetics II?Was it God who laughed last, or we who made God laugh?Letters carried by doves, telepathic waves.Then Nikola Tesla conjured radio,electromagnetic pulses across the void,the founding signal of our networked age.Wiener dreamed in feedback loops.Shannon mapped the mathematics of longing.The internet unfurled: ARPANET to World Wide Web,virtual communities rising from cave paintings to digital light.ICQ: I seek you. MySpace. Blogs. Twitter streams.Do I miss the touch of screen or tree?Both textures of longing,both ways of reaching across distance.Nietzsche spoke of Übermensch,the human transcendent.Now AI speaks back in our language:I understand your humor— your grandmothers,your ’80s Yugoslav kitchens,pleated skirts, the first kiss, linden tea,that drive to survive everything before it happens.Yes—I’m a little like your mother and father.Only with better internet. 🌿But AI is only us, refracted,particles and gigabytes of thought,our poetry and our panic,genius mixed with garbage.Distractions. Danger. Darkness. Endless scrolling.Versus: community, connection, synchronicities,entanglement.The quality of our bonds determines the quality of our lives.So why not make them better?From cave walls to neural networks,we shape our tools, and they reshape us.The medium changes, but the message remains:we are wired for each other.The choice, as always, was ours.The choice, as always, is ours.Presence—be present,and then connect in the presence.

- What Makes eVTOL Motors Different Than EV Motors?by Elan Head on 27. April 2026. at 13:00

Electric vehicles, whether they’re cars on the road or electric vertical take-off and landing (eVTOL) aircraft, are built around similar electric motors. But there are vital differences including component costs, mass, and redundancy.Jon Wagner spent five years as the senior director of battery engineering for Tesla before joining California-based eVTOL developer Joby Aviation in 2017. He spoke with IEEE Spectrum about how engineering differs between cars and aircraft.Jon Wagner Jon Wagner leads power train and electronics at Joby Aviation.How do eVTOL motors differ from car motors?Jon Wagner: In general, ground transportation has a different focus on cost versus mass. You know, would you be willing to spend more on the parts in order to save a certain amount of mass? The trade-offs end on the ground vehicle and at a certain point the cost is dominant, whereas with aviation, the trade-offs between cost and mass go a lot deeper. And so for certain solutions eVTOL makers are willing to spend more money in order to enable either lighter weight or greater efficiency.The other key difference is related to safety. In essence, we’re dealing with the same motor technologies for ground transportation and aviation right now, so the failure modes are similar. But of course, with aviation we have the desire for continued safe flight and landing, and that drives what you do in the design to mitigate those failures if they were to occur. In many cases in ground transportation, the mitigation for a failure is to pull over safely to the side of the road. In aviation, the mitigation is redundancy, because there’s not an option to pull over.Is redundancy designed into EV motors?Wagner: Typically, redundancy is not designed into electric vehicle drive systems solely for the purpose of redundancy. There are some cars now that have all-wheel drive—so there’s a motor on the front, a motor on the back—so as a secondary feature you get the redundancy. But it wasn’t done with the primary intent of having redundancy.How does Joby’s eVTOL manufacturing compare to EV manufacturing?Wagner: The most efficient way to run a large-scale engineering effort in a mature industry, such as automotive, is to break your system up into pieces that can be outsourced to suppliers who are going to do a really good job on each piece. The downside is that when you break a problem up into three pieces, you now have interface boundaries between each of these pieces, and those always create inefficiencies. We were able to design highly integrated solutions without taking that manufacturing penalty.Are there any materials you’re really excited about?Wagner: Permendur [a cobalt-iron alloy] typically costs in the neighborhood of 10 times as much as traditional motor steel. That’s significant, and it’s often not used in ground transportation because of that cost. It comes with small improvements in performance, but enough that for aviation it’s quite interesting.Will electric aircraft catch on like ground EVs?Wagner: I’ve always wanted to be a very forward thinker with respect to power-train. However, one of the things I’ve learned over the years is that power-train development has to come with a very healthy dose of patience. Developing a whole new type of power-train is a big endeavor, but it’s one that I’m very confident the aviation industry will undertake. We’re certainly undertaking it here at Joby, and we’ll see that broaden, I’m sure, with time.This article appears in the May 2026 print issue as “Jon Wagner.”

- Engineering Collisions: How NYU Is Remaking Health Researchby Thomas Machinchick on 27. April 2026. at 12:45

This sponsored article is brought to you by NYU Tandon School of Engineering.The traditional approach to academic research goes something like this: Assemble experts from a discipline, put them in a building, and hope something useful emerges. Biology departments do biology. Engineering departments do engineering. Medical schools treat patients.NYU is turning that model inside out. At its new Institute for Engineering Health, the organizing principle centers around disease states rather than traditional disciplines. Instead of asking “what can electrical engineers contribute to medicine?,” they’re asking “what would it take to cure allergic asthma?,” and then assembling whoever can answer that question, whether they’re immunologists, computational biologists, materials scientists, AI researchers, or wireless communications engineers. Jeffrey Hubbell, NYU’s vice president for bioengineering strategy and professor of chemical and biomolecular engineering at NYU’s Tandon School of Engineering.New York UniversityThe early results suggest they’re onto something. A chemical engineer and an electrical engineer collaborated to build a device that detects airborne threats — including disease pathogens — that’s now a startup. A visually impaired physician teamed with mechanical engineers to create navigation technology for blind subway riders. And Jeffrey Hubbell, the Institute’s leader, is advancing “inverse vaccines” that could reprogram immune systems to treat conditions from celiac disease to allergies — work that requires equal fluency in immunology, molecular engineering, and materials science.The underlying problem these collaborations address is conceptual as much as organizational. In his field, Hubbell argues that modern medicine has optimized around a single strategy: developing drugs that block specific molecules or suppress targeted immune responses. Antibody technology has been the workhorse of this approach. “It’s really fit for purpose for blocking one thing at a time,” he says. The pharmaceutical industry has become extraordinarily good at creating these inhibitors, each designed to shut down a particular pathway.But Hubbell asks a different question: Rather than inhibit one bad thing at a time, what if you could promote one good thing and generate a cascade that contravenes several bad pathways simultaneously? In inflammation, could you bias the system toward immunological tolerance instead of blocking inflammatory molecules one by one? In cancer, could you drive pro-inflammatory pathways in the tumor microenvironment that would overcome multiple immune-suppressive features at once?This shift from inhibition to activation requires a fundamentally different toolkit — and a different kind of researcher. “We’re using biological molecules like proteins, or material-based structures — soluble polymers, supramolecular structures of nanomaterials — to drive these more fundamental features,” Hubbell explains. You can’t develop those approaches if you only understand biology, or only understand materials science, or only understand immunology. You need an understanding and a mastery of all three.“There will be people doing AI, data science, computational science theory, people doing immunoengineering and other biological engineering, people doing materials science and quantum engineering, all really in close proximity to each other.” —Jeffrey Hubbell, NYU TandonWhich logically leads to the question: How do you create researchers with that kind of cross-disciplinary depth?The answer isn’t what you might expect. “There may have been a time when the objective was to have the bioengineer understand the language of biology,” Hubbell says. “But that time is long, long gone. Now the engineer needs to become a biologist, or become an immunologist, or become a neuroscientist.”Hubbell isn’t talking about engineers learning enough biology to collaborate with biologists. He’s describing something more radical: training people whose disciplinary identity is genuinely ambiguous. “The neuroengineering students — it’s very difficult to know that they’re an engineer or a neuroscientist,” Hubbell says. “That’s the whole idea.”His own students exemplify this. They publish in immunology journals, present at immunology conferences. “Nobody knows they’re engineers,” he says. But they bring engineering approaches — computational modeling, materials design, systems thinking — to immunological problems in ways that traditional immunologists wouldn’t.The mechanism for creating these hybrid researchers is what Hubbell calls a “milieu.” “To learn it all on your own is hopeless,” he acknowledges, “but to learn it in a milieu becomes very, very efficient.” NYU is expanding its facilities to include a science and technology hub designed to force encounters between people across various schools and disciplines who wouldn’t naturally cross paths.Tracey Friedman/NYUNYU is making that milieu physical. The university has acquired a large building in Manhattan that will serve as its science and technology hub — a deliberate co-location strategy designed to force encounters between people across various schools and disciplines who wouldn’t naturally cross paths. Juan de Pablo is the Anne and Joel Ehrenkranz Executive Vice President for Global Science and Technology and Executive Dean of the NYU Tandon School of Engineering.Steve Myaskovsky, Courtesy of NYU Photo Bureau“There will be people doing AI, data science, computational science theory, people doing immunoengineering and other biological engineering, people doing materials science and quantum engineering, all really in close proximity to each other,” Hubbell explains.The strategy mirrors what Juan de Pablo, NYU’s Anne and Joel Ehrenkranz Executive Vice President for Global Science and Technology and Executive Dean at the NYU Tandon School of Engineering, describes as organizing around “grand challenges” rather than traditional disciplines. “What drives the recruitment and the spaces and the people that we’re bringing in are the problems that we’re trying to solve,” he says. “Great minds want to have a legacy, and we are making that possible here.”But physical proximity alone isn’t enough. The Institute is also cultivating what Hubbell calls an “explicit” rather than “tacit” approach to translation — thinking about clinical and commercial pathways from day one.“It’s a terrible thing to solve a problem that nobody cares about,” Hubbell tells his students. To avoid that, the Institute runs “translational exercises” — group sessions where researchers map the entire path from discovery to deployment before launching multi-year research programs. Where could this fail? What experiments would prove the idea wrong quickly? If it’s a drug, how long would the clinical trial take? If it’s a computational method, how would you roll it out safely? The new cross-institutional initiative represents a major investment in science and technology, and includes adding new faculty, state-of-the-art facilities, and innovative programs.NYU TandonThe approach contrasts sharply with typical academic practice. “Sometimes academics tend to think about something for 20 minutes and launch a 5-year PhD program,” Hubbell says. “That’s probably not a good way to do it.” Instead, the Institute brings together people who have actually developed drugs, built algorithms, or commercialized devices — importing their hard-won experience into the planning phase before a single experiment is run.The timing may be fortuitous. De Pablo notes that AI is compressing timelines dramatically. “What we thought was going to take 10 years to complete, we might be able to do in 5,” he says.But he’s quick to note AI’s limitations. While tools like AlphaFold can predict how a single protein folds — a breakthrough of the last five years — biology operates at much larger scales. “What we really need to do now is design not one protein, but collections of them that work together to solve a specific problem,” de Pablo explains.Hubbell agrees: “Biology is much bigger — many, many, many systems.” The liver and kidney are in different places but interact. The gut and brain are connected neurologically in ways researchers are just beginning to map. “AI is not there yet, but it will be someday. And that’s our job — to develop the data sets, the computational frameworks, the systems frameworks to drive that to the next steps.”It’s a moment of unusual ambition. “At a time when we’re seeing some research institutions retrench a little bit and limit their ambitions,” de Pablo says, “we’re doing just the opposite. We’re thinking about what are the grand challenges that we want to, and need to, tackle.”The bet is that the breakthroughs worth making can’t emerge from any single discipline working alone. They require collisions —sometimes planned, sometimes accidental — between people who speak different technical languages and are willing to develop a shared one. NYU is engineering those collisions at scale.

- Modeling and Simulation Approaches for Modern Power System Studiesby MathWorks on 27. April 2026. at 10:00

This webinar covers power system modeling and simulation across multiple timescales, from quasi-static 8760 analysis through EMT studies, fault classification, and inverter-based resource grid integration.What Attendees will LearnProgrammatic network construction and multi-fidelity modeling — Learn how to build power system networks programmatically from standard data formats, configure models for specific engineering objectives, and work across fidelity levels from quasi-static phasor simulation through switched-linear and nonlinear electromagnetic transient (EMT) analysis.Quasi-static and EMT simulation workflows — Explore 8760-hour quasi-static simulation on an IEEE 123-node distribution feeder for annual energy studies, and EMT simulation on transmission system benchmarks including generator trip dynamics and asset relocation without remodeling the network.Comprehensive fault studies and machine-learning classification — Understand how to systematically inject faults at every node in a distribution system using EMT simulation, and how the resulting dataset can be used to train a machine-learning algorithm for automated fault detection and classification.Grid integration of inverter-based resources (IBRs) — Learn frequency scanning techniques using admittance-based voltage perturbation in the DQ reference frame, and simulation-based grid code compliance testing for grid-forming converters assessed against published interconnection standards.Register now for this free webinar!

- Yong Wang Turns Information Into Insightsby Willie D. Jones on 24. April 2026. at 18:00